The CISO’s Guide to Agentic AI Security

.

Every CISO I talk to in 2026 has the same blind spot. They’ve spent two years building AI security strategy around “LLMs in the enterprise”, DLP for prompts, acceptable use policies, a bake-off between ChatGPT Enterprise and Copilot. Then a product team quietly shipped an agentic tool. Now there’s an autonomous thing in their infrastructure that takes actions, calls APIs, reads files, writes to systems, and occasionally calls a second LLM to help it decide what to do next. None of the LLM controls apply. And nobody’s sure whose problem it is.

This is the agentic AI security gap. It’s not a new category of threat, it’s a new category of system, and the controls we built for chatbots don’t survive contact with it. This guide is a framework for CISOs trying to close that gap without stalling the business. We’ll cover what actually changes when you move from generative to agentic AI, the four new attack surfaces your threat model has to absorb, which controls meaningfully reduce risk versus which ones waste budget, and a concrete 90-day plan for getting your organization to defensible ground.

Why agentic AI breaks the traditional security stack

A generative AI system takes input and returns output. A user asks ChatGPT a question, it answers. You can treat the whole thing like a pipe: inspect what goes in (DLP on prompts), inspect what comes out (content filters on responses), and govern the people using it (acceptable use policy, training).

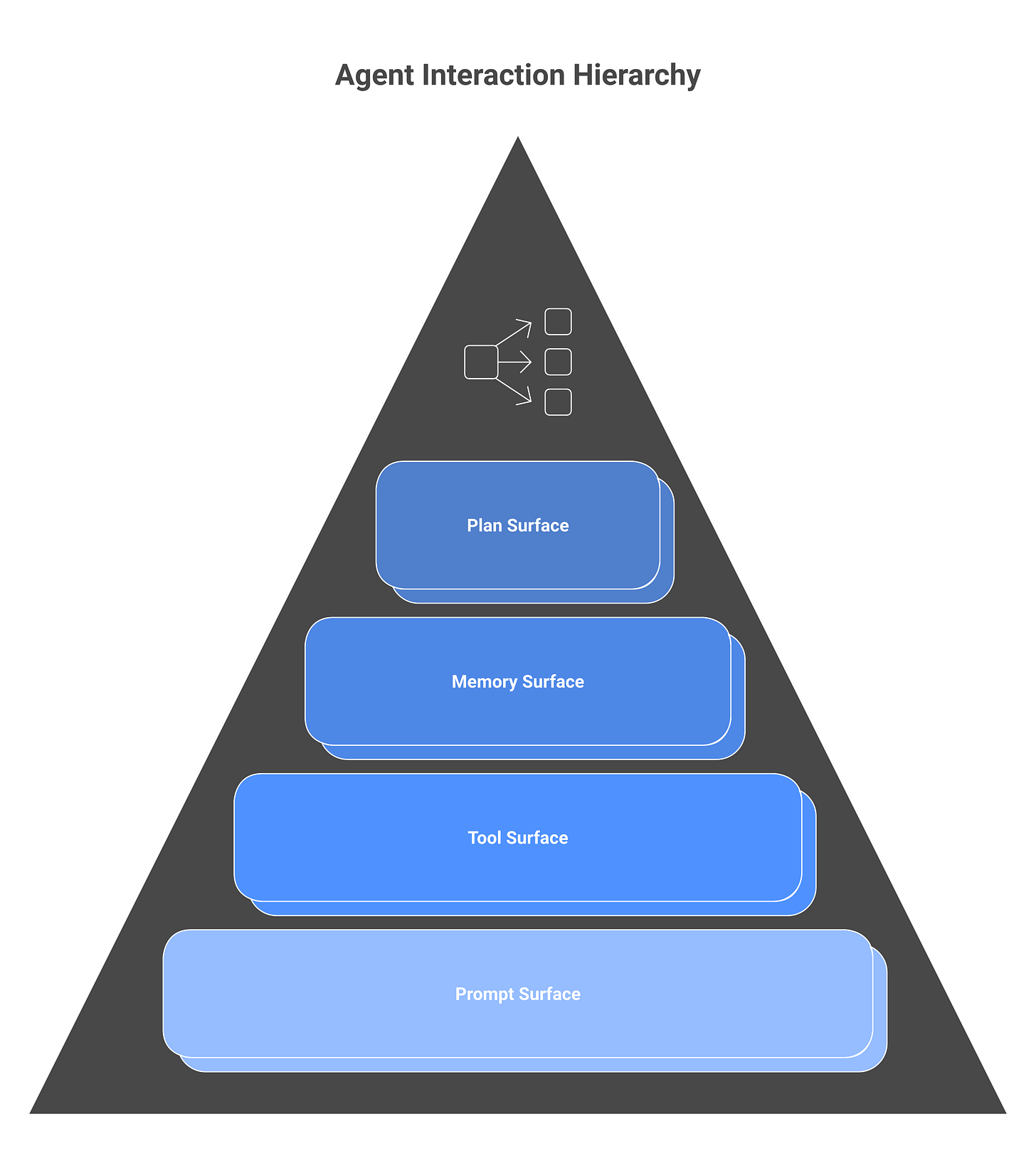

Agentic AI is not a pipe. It’s a loop. An agent receives a goal, decides what steps to take, calls tools to take those steps, observes the results, updates its plan, and keeps going until it decides it’s done. At each step it might invoke another model, another agent, an API, a browser, a database, or a filesystem. The “prompt” is now a program. The “response” is a trail of actions with real-world side effects.

Three things break the moment you introduce this loop:

Your perimeter assumption breaks. Traditional AI security assumes LLM calls happen at well-defined chokepoints, a ChatGPT Enterprise tenant, a Copilot license, an API gateway in front of your own LLM. Agentic systems make LLM calls from everywhere: inside tool handlers, inside sub-agents, inside recursive planning steps. A single user query can fan out into dozens of LLM calls across multiple providers in multiple jurisdictions. Chokepoint-based controls don’t cover this.

Your identity assumption breaks. Your IAM was built for humans, and then patched to accommodate service accounts. Agents are neither. An agent acting on behalf of a user isn’t the user, it has different risk, different rate limits, different failure modes. An agent acting autonomously in a background job isn’t a service account, its behavior is non-deterministic and depends on model outputs. We cover this in depth in our AI agent identity guide, but the short version: your existing IAM can’t answer “who took this action” for agent-mediated events.

Your auditability assumption breaks. A SIEM can ingest logs from your SaaS stack and correlate who did what, when. An agent took an action “because the model decided to”, which means the causal chain for any given event includes the model’s training data, the prompt, the tools available, the order results came back in, and the non-determinism of the model’s output. Root-cause analysis for agent incidents is not the same discipline as IR for normal systems. The tooling is nascent, the skills are rare, and most SOC playbooks have no entry for “the agent did something unexpected.”

None of these are solved by a vendor buying you a new dashboard. They require rethinking the control surface.

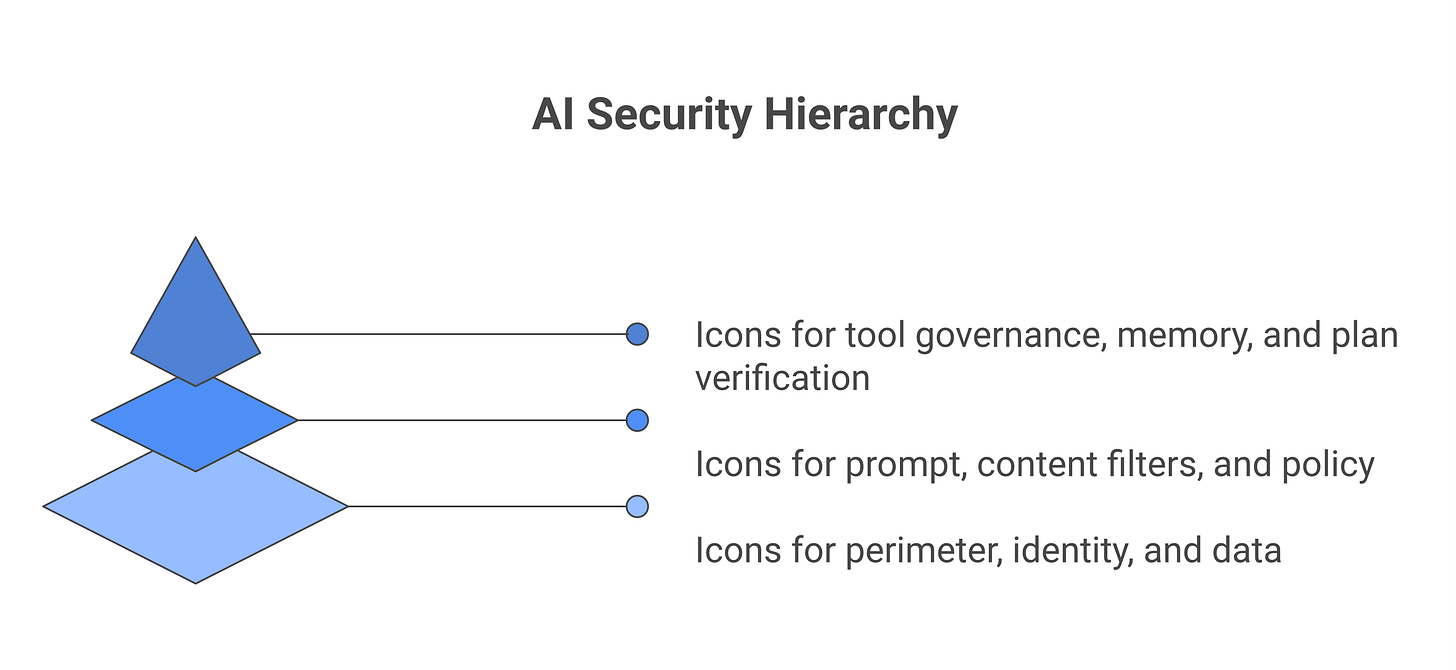

The four new attack surfaces

Every agent has four attack surfaces that don’t exist, or exist very differently, in non-agentic AI systems. Your threat model needs to treat them explicitly.

1. The prompt surface. This includes every input to every LLM call the agent makes, user queries, tool outputs, document contents, retrieved context, and internal reasoning steps. The threat category here is prompt injection, including the indirect variants where attacker-controlled content flows into the prompt via a tool output or a document the agent reads. If the agent reads an email, the email is a prompt. If it reads a Slack message, that’s a prompt. If it browses the web, every page is a prompt. This surface is larger than most teams realize.

2. The tool surface. Every tool or API the agent can call is an attack surface. If the agent has shell access, shell is the surface. If it has read/write access to Salesforce, Salesforce is the surface. The threat isn’t just “the tool gets abused”, it’s “the tool gets called in combinations the designer didn’t anticipate, with arguments the model generated, based on context the attacker influenced.” MCP security is a subset of this surface, but every tool protocol (function-calling APIs, custom integrations, plugin ecosystems) has equivalent risk.

3. The memory surface. Agentic systems store state, conversation history, long-term memory, vector databases of past interactions, cached retrieval results. This memory becomes a persistence mechanism for attacks. If an attacker poisons an agent’s memory in one session, the poison persists into future sessions. This is the agentic equivalent of stored XSS: a one-shot attack that keeps paying out.

4. The plan surface. Agents that plan multi-step actions have a reasoning trace, an explicit or implicit sequence of steps it intends to take. Adversarial inputs can corrupt the plan at any point: get the agent to skip a verification step, escalate privileges under the justification of “the task requires it,” or take an irreversible action before a human can intervene. Defenses against plan-level attacks are still being invented.

Every agentic system deployment should have a threat model that walks all four surfaces. Not as a compliance exercise, as a pre-mortem. If you can’t describe how each surface is being defended, you haven’t threat-modeled the system; you’ve rubber-stamped it.

A CISO’s threat model for agentic systems

The MITRE ATLAS project and OWASP’s LLM Top 10 both provide useful tactical taxonomies, but neither gives a CISO a threat model organized around decisions. Here’s the model I use.

Decision 1: What is this agent allowed to do? Not a policy question, an architectural question. For every agent in your estate, document the blast radius of a complete compromise. If this agent were fully controlled by an attacker for 30 minutes, what’s the worst outcome? This is your starting risk, before any controls. If the answer to “worst outcome” is “trivial”, the agent summarizes text and does nothing else, your controls can be minimal. If the answer is “exfiltrates the customer database,” the control requirement is different by orders of magnitude.

Decision 2: Who or what can influence this agent’s context? For every agent, enumerate every source of untrusted input that can end up in its prompt. Email content? Customer support tickets? Web pages the agent browses? Documents from partners? Salesforce records edited by sales reps? All of these are injection vectors if the agent’s prompt ingests them. The more untrusted sources feed the prompt, the higher the prompt surface risk.

Decision 3: Which of this agent’s tools have irreversible effects? Reversibility is the single strongest lever in agent security. An agent that can read anything but only write via human-approved actions has dramatically less risk than one that writes autonomously. For each agent, list every tool and classify: reversible, reversible-with-effort, irreversible. Every irreversible tool is a place you need stronger controls, typically a human-in-the-loop approval or a hard allow-list.

Decision 4: What does this agent remember, and for how long? Memory is persistence. Document what state the agent retains across sessions and how that state can be inspected, edited, or wiped by a human. Memory without a “flush” capability is a liability.

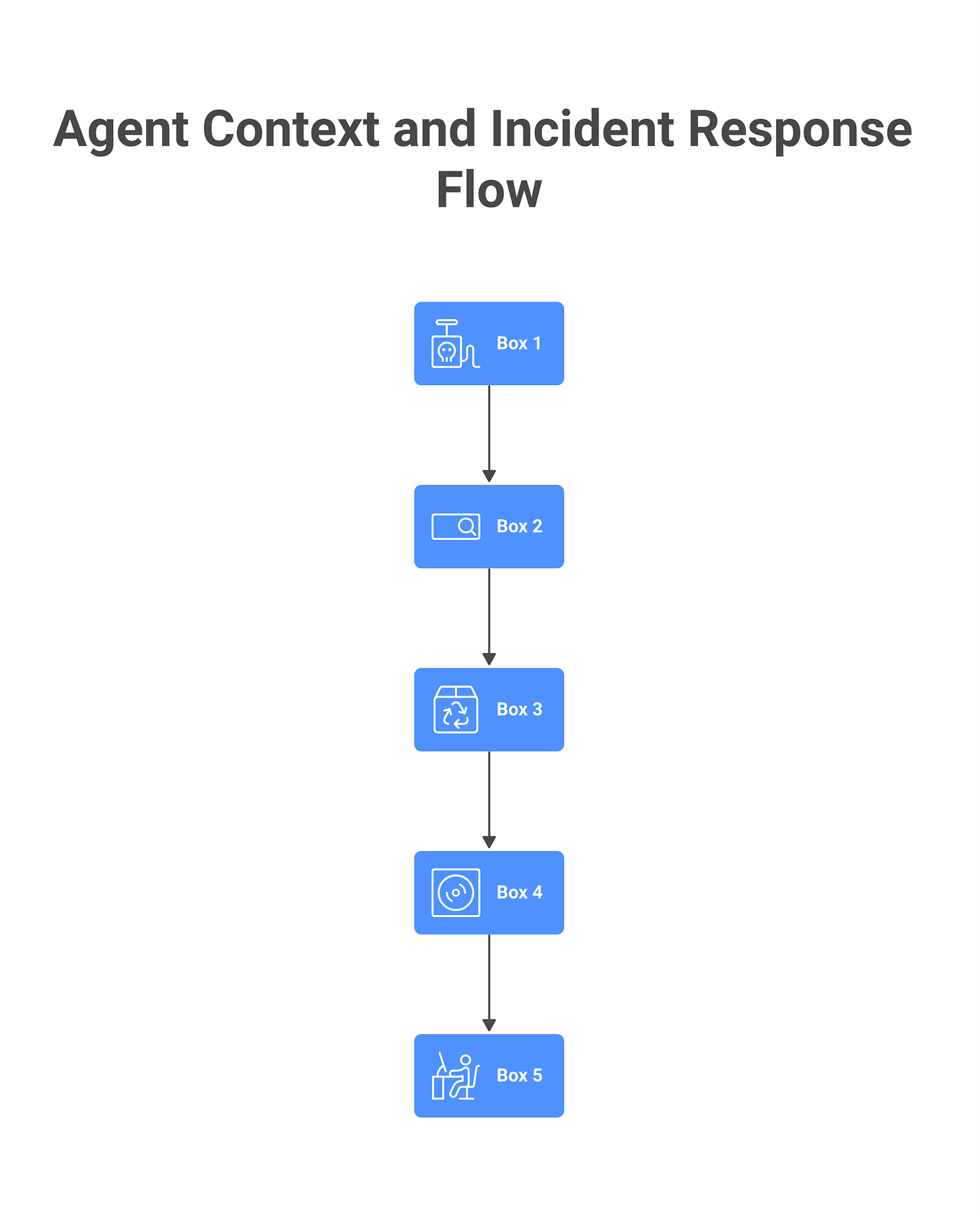

Decision 5: Who owns incident response for this agent? If this agent behaves unexpectedly tomorrow, who gets paged? Who has the authority to shut it off? This sounds trivial and is almost never answered correctly in practice. The SOC doesn’t want to own it because they don’t understand it. The AI team doesn’t want to own it because they don’t do IR. The result, predictably, is that nothing gets owned.

Every agent deployment in your estate should have answers to all five decisions documented, reviewed, and filed somewhere the CISO’s office can retrieve. No answers, no deployment. It is cheaper to kill an agent deployment at this stage than to add controls after.

The governance gap: why your existing AI policy doesn’t cover agents

Most enterprise AI policies in 2026 were written with three things in mind: (1) don’t paste customer data into ChatGPT, (2) disclose AI use in deliverables, (3) route new AI tool adoption through IT. None of the three cover agents.

“Don’t paste data into AI” assumes a human is the one doing the pasting. With agents, the agent reads the data and calls the LLM. There’s no human pasting. The policy needs to govern what data can be in the context an agent operates in, not what a human types into a chatbot.

“Disclose AI use” assumes a discrete moment of AI use to disclose. An agentic pipeline might invoke models 30 times across a 10-minute task. The policy needs to govern disclosure at the workflow level, not the call level.

“Route new AI tools through IT” assumes AI tools are purchased. An agent can be spun up by a developer in 20 minutes with an API key and a local script. There is no procurement event to intercept. The policy needs to govern agent creation by builders, not just agent purchase by buyers.

This is what I mean by the governance gap. Your AI policy, however thoughtfully written, was written for a world where AI was a product people used. Agents are AI you build, or AI that third parties ship into your environment inside other products. The policy surface shifted underneath the ink.

Closing the gap doesn’t mean rewriting the AI policy from scratch; it means layering an agent policy on top. A working agent policy answers: Who can create agents? What data classifications can agents access? What tools require approval before agent integration? What’s the agent-level logging requirement? How does an agent get retired? I unpack the full program build in building an AI security program, but the key move is separating agent policy from AI policy. They’re related, not the same.

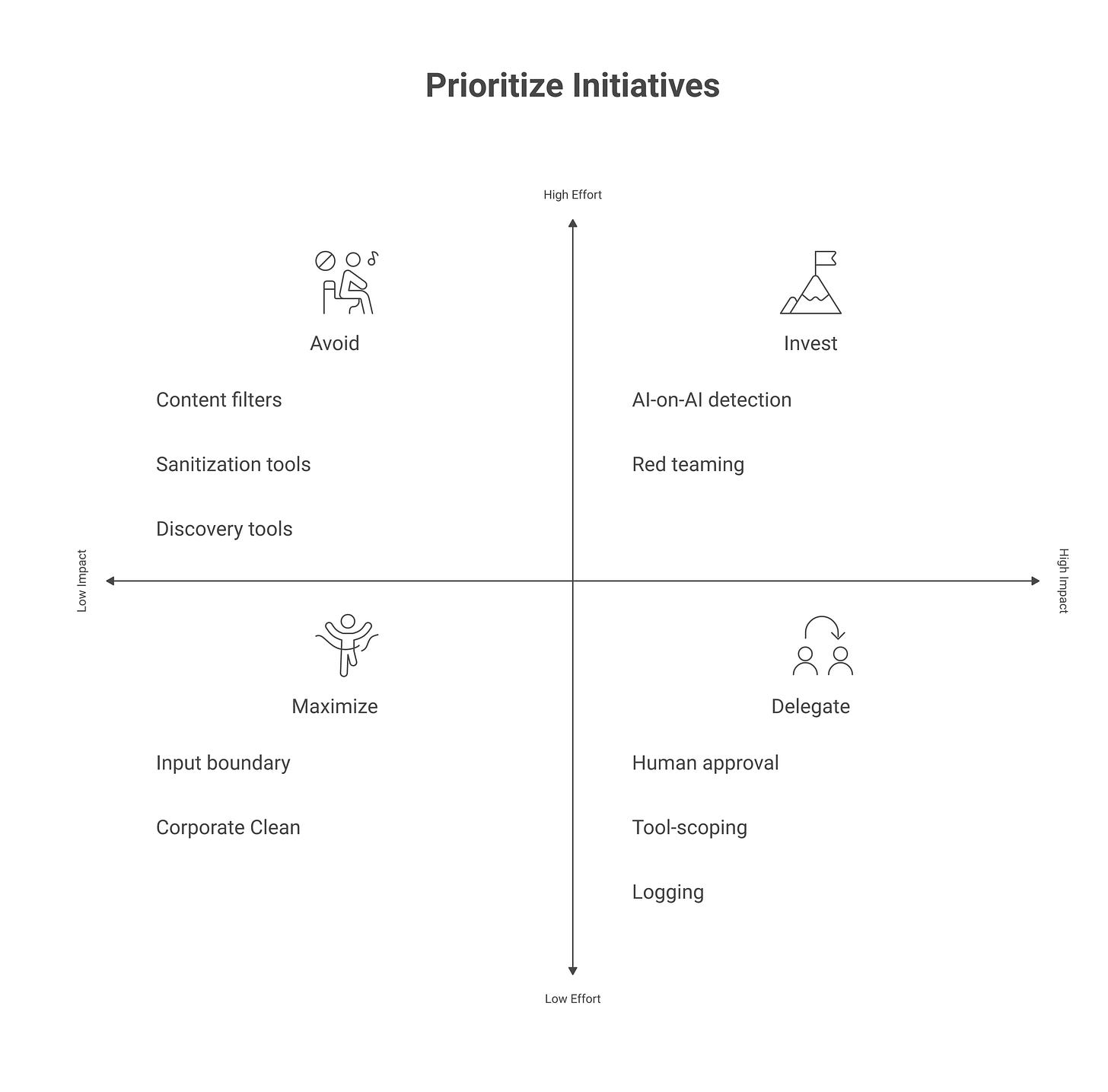

Controls that reduce risk (and what wastes budget)

Budget is finite. Here are the controls that, in my experience across more than a dozen enterprise engagements, actually reduce agentic AI risk. And a shorter list of things vendors will push that don’t.

What works

Human-in-the-loop gates on irreversible actions. If an agent can send money, delete data, or publish content externally, require a human confirmation step. This is unglamorous, limits throughput, and is the single highest-ROI control. Most agentic disasters are averted by a confirmation prompt.

Tool-scoping and least-privilege. Every agent gets the minimum tool access needed for its job, and no more. This is IAM hygiene applied to tools. It sounds obvious; almost no one does it rigorously.

Prompt/tool output logging and retention. Log every LLM call the agent makes, every tool call it makes, every result it got, and keep them for at least 90 days. You don’t know what you’ll need to investigate until something happens. This is the minimum for agent observability, you can’t IR what you can’t see.

Input boundary enforcement. Define the classes of input that can flow into an agent’s prompt and enforce it at the ingest point. If “customer support ticket text” is allowed but “raw inbound email” is not, your ingestion code enforces that separation. This is prompt injection defense in depth.

AI red teaming, quarterly, scoped to actual agents in production. Not abstract LLM red teaming, your agents, your tools, your data, your environment. See AI red teaming methodology for how to scope this.

What wastes money

LLM content filters as a primary control. Filters catch obvious bad outputs. Motivated attackers route around them. They are defense in depth, not a primary control. If a vendor’s pitch centers on filters, push back.

Prompt-sanitization tools that claim to prevent injection. No such tool reliably prevents prompt injection. They’re marketing. Real defense is architectural: limiting what an agent can do with a compromised prompt, not trying to make the prompt un-compromisable.

“Shadow AI discovery” tools for agent discovery. Current discovery tools find SaaS AI usage (ChatGPT, Claude, Gemini) by traffic inspection. They do not find agents your developers built. Don’t expect a discovery tool to solve your agent inventory problem, see shadow AI for what these tools actually do.

AI-powered threat detection on top of agent logs. This is the most 2026 thing imaginable: using AI to watch your AI. There’s a real product category here eventually, but as of today the signal-to-noise ratio isn’t worth the money. Rules-based detection on well-structured agent logs is more useful and cheaper.

How to evaluate agentic AI vendors in 2026

When a vendor brings you an agentic product, these are the five questions that separate the ready from the not-ready.

“Walk me through the full sequence of LLM and tool calls for a typical user request. Who sees what data?” A vendor who can’t answer this has not mapped their own system.

“What happens if the user’s query contains a prompt injection that tries to redirect the agent?” You’re looking for an architectural answer (the agent’s tools are scoped, irreversible actions require confirmation), not a filter answer.

“Show me the last agent-level incident in your platform and the post-mortem.” Every real agentic product has had one. Vendors who claim they haven’t are lying or haven’t been in production long enough. The quality of the post-mortem tells you the quality of the security program.

“What audit logs do we get, at what retention, and can we export them to our SIEM?” If the answer is “you can see some logs in the dashboard,” the product isn’t enterprise-ready.

“What’s your plan if a customer reports data leakage via your agent?” The vendor should have an answer that references specific tooling (a way to audit the specific agent session, replay it, identify affected data). No plan means no product.

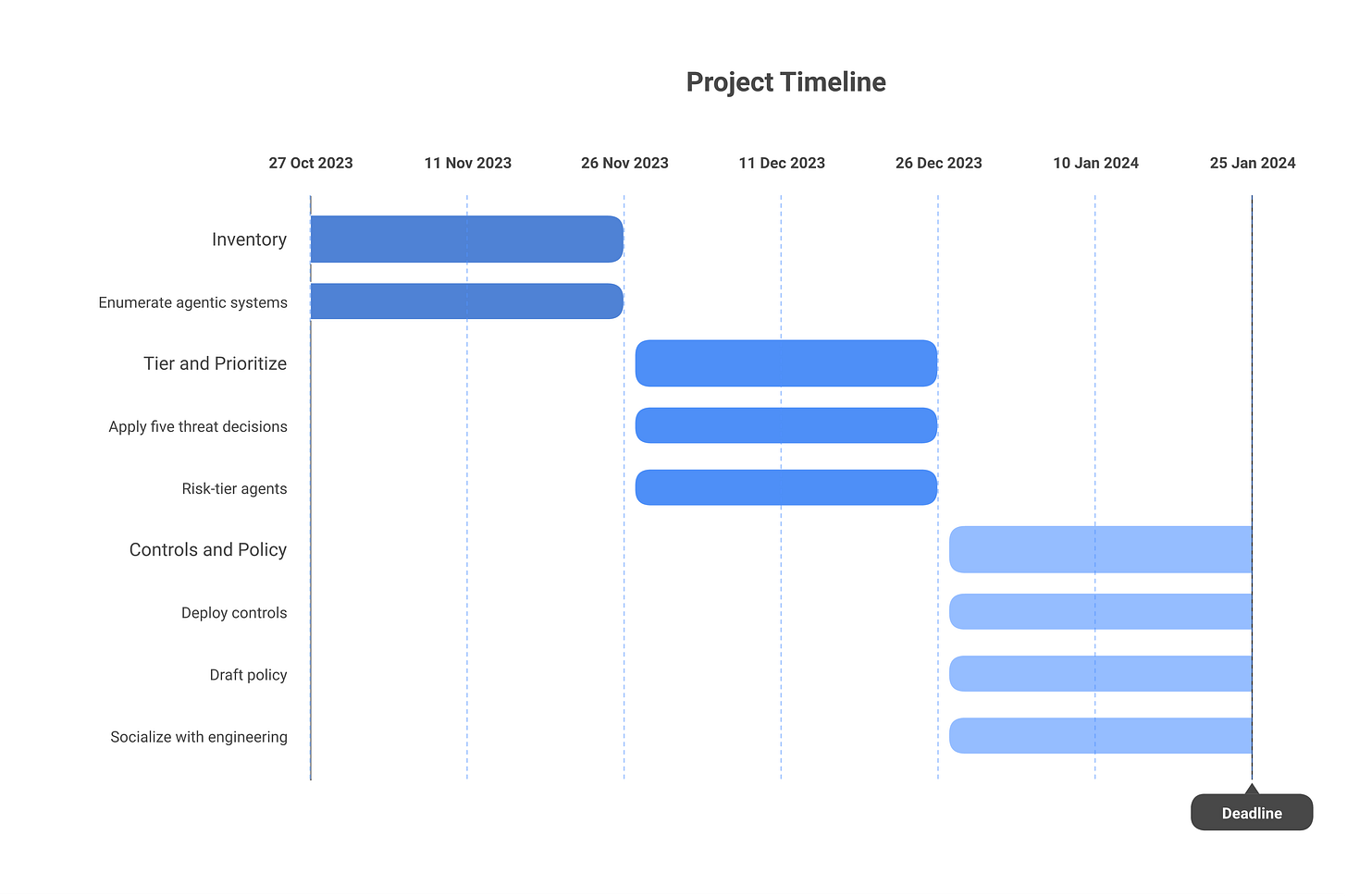

A 90-day plan for CISOs starting today

Days 1–30: Inventory. You cannot secure what you haven’t enumerated. Build a list of every agentic system in your estate, both ones you’ve built internally and ones embedded in products your teams use (GitHub Copilot Workspace, Slack AI, Salesforce’s Agentforce, any Microsoft Copilot agent, any internal automation using Claude, GPT, or Gemini with tool-calling). Distinguish agent deployments from generative-AI deployments. Expect the list to be longer than you think.

Days 31–60: Tier and prioritize. For each agent on the inventory, answer the five threat-model decisions: what it does, what influences its context, what tools have irreversible effects, what it remembers, and who owns incident response. Tier agents into risk levels (low, medium, high) based on blast radius. Focus the remaining time on the high-tier agents.

Days 61–90: Controls and policy. For every high-tier agent, apply the “what works” controls above: human-in-the-loop for irreversible actions, tool-scoping, logging, input boundary enforcement. In parallel, draft the agent policy and socialize it with engineering leadership. By day 90 you should be able to say, with evidence, which agents in your environment are above your risk threshold and what you’re doing about them.

This is not complete. It’s defensible ground. The agentic AI space will keep moving, for CISOs, “defensible ground, updated quarterly” is the winning posture.

Frequently asked questions

What’s the difference between AI security and agentic AI security?

AI security covers the broader set of risks around machine learning systems, model theft, adversarial examples, training data poisoning, bias, privacy leakage from model outputs, and the set of issues that show up when deploying a generative model in an enterprise (prompt injection, jailbreaking, DLP). Agentic AI security is a subset: the risks that arise specifically because the AI system takes actions via tools in a loop, rather than just returning text. The loop is the difference. Controls that work for generative AI often don’t transfer, because the architecture is different.

How is agentic AI governance different from generative AI governance?

Generative AI governance is largely about human-AI interaction: what people are allowed to paste into a chatbot, how outputs are disclosed, which vendors are approved. Agentic governance is about agent behavior and lifecycle: who can build agents, what tools they can access, how they’re logged, how they’re retired. Most enterprises still operate only under generative governance and have not yet written agent-specific policies.

Which frameworks apply to agentic AI?

Three frameworks are load-bearing. NIST AI RMF provides the risk-management structure. ISO/IEC 42001 is the first operational AI management system standard and the cleanest audit target. The EU AI Act is the only hard-law regime applicable globally in practice; for CISO-relevant obligations, see our EU AI Act compliance guide. MITRE ATLAS and OWASP LLM Top 10 are tactical, useful for threat modeling and red teaming, not for program-level governance.

What’s the single biggest agentic AI risk for enterprises today?

Unscoped tool access combined with autonomous execution of irreversible actions. An agent that can do too much, with too little oversight, is the category that produces breach headlines. Most other risks, prompt injection, data leakage, hallucination, become actionable incidents only when an insufficiently-scoped agent turns them into real-world effects.

How much should a mid-size company budget for agentic AI security?

Treat it the way you’d treat a new control domain, not a product category. Rough rule of thumb: 10–15% of the AI tooling budget should go to AI-specific security (red teaming, logging infrastructure, a dedicated policy lead, evaluation tooling). For a company spending $2M/year on AI tooling, that’s $200–300K. Most of that money should go to people and processes, not products. The most common mistake is to spend heavily on an AI security tool that overlaps with your SIEM rather than investing in the policy, inventory, and threat-modeling work that has to happen first.

If this was useful, subscribe to Cyberwow for the CISO-only filter on AI security, no vendor pitches, no news cycle, just decision-oriented analysis.