Building an AI Security Program: From Policy to Implementation

A program-level blueprint covering policy, discovery, controls, and board reporting, plus the five-stage maturity model behind the programs that actually work in 2026.

Every large enterprise I talk to has an AI policy. None of them have an AI security program. The policy sits in SharePoint, gets signed off by legal and compliance, and then nothing happens. Engineering ships agents. Finance runs GenAI copilots. HR tries AI resume screening. The policy says “you must have a governance review before deploying AI,” and none of those teams did one. Three years of policy work produced zero impact.

This is the gap. A policy is a document that describes intent. A program is the operating model, the people, the processes, the controls, and the feedback loops that turn intent into reality. Building an AI security program means moving from “we have rules” to “we have visibility, consistent decision-making, and measurable risk reduction.” This post is a blueprint for that move. I’ll walk you through the maturity curve, the organizational choices you have to make (and most teams get wrong), how to start inventory and discovery from scratch, the translation problem that kills most programs, the tooling landscape in 2026, and how to report AI risk to your board in terms they care about.

Why most AI policies are shelfware

An AI policy typically has three sections. First: what data can go into AI systems. Second: how to disclose AI use in customer deliverables. Third: which tools require IT approval. These are sensible rules. Almost none of them are enforced.

The reason is not that teams are rebels. It’s that policies lack operational infrastructure. A policy is a rule. A program is the mechanism that makes a rule actually apply to real decisions in real time. No program, and the policy becomes fiction almost immediately.

Here’s what actually happens: a policy says “ChatGPT use requires prior approval.” Six months later you do an audit and find 47 teams using ChatGPT. When you ask why, the answer is: “We never knew we had to ask. There was no process to ask. We didn’t realize it needed approval.” The policy was there. The operational surface was not.

Building a program fixes this. It means embedding the policy into a workflow, a tool, a role, a standing meeting. It means having a person whose job is to answer “does this AI deployment need review, and if so, what does review look like?” It means discovery running every 90 days so you know what’s actually happening. It means controls that prevent circumvention (or at minimum, make circumvention detectable). And it means reporting, to leadership, that shows not just what the program is supposed to do but what it’s actually doing.

Most teams skip this because it looks like overhead. It is. It’s also the difference between a policy that matters and a policy whose only effect was paying the consultant who wrote it.

The five stages of AI security program maturity

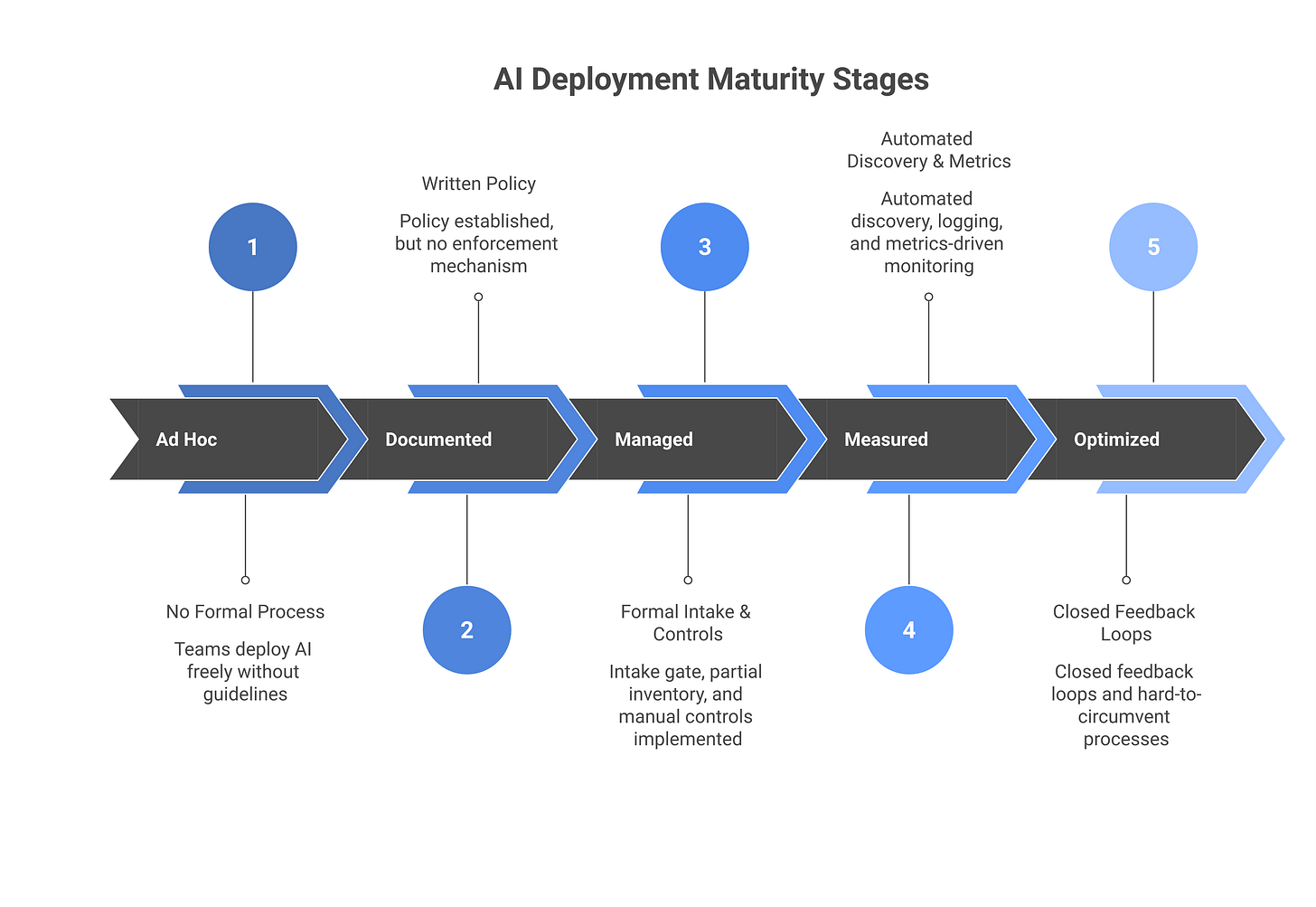

Maturity levels help you assess where you are and what move comes next. I’ve sorted programs into five stages based on what I’ve seen across 15+ enterprise deployments.

Stage 1: Ad hoc. You have no formal AI security process. Teams deploy AI systems when they decide to. There’s no inventory, no approval gate, no controls. The only thing preventing disaster is luck and the fact that your teams haven’t tried anything risky yet. Many organizations are here and don’t know it.

Stage 2: Documented. You have written policy. You’ve assigned responsibility to someone, usually a CISO deputy or a GRC analyst. There’s a process document somewhere that describes how teams should request AI deployments. Compliance sign-off is a gate. The problem: the process has no enforcement mechanism. If a team goes around it, you find out in an audit, if at all. You’re in this stage if your policy is current but your inventory is incomplete.

Stage 3: Managed. You have a formal intake process with enforcement. New AI deployments go through a review gate before they go into production. You have a partial inventory of systems. You have a person or small team that owns the program day-to-day. You know about most of what’s happening, though not all. You’re capable of saying “no” to an unsafe deployment. The gap: your controls are still mostly manual and preventative. You don’t yet have automated discovery or continuous monitoring.

Stage 4: Measured. You have automated discovery running continuously. You have logging and monitoring of AI systems in production. You can see, with data, whether the program is reducing risk or just adding process. You have incident response playbooks for AI-related issues. Your team owns the full lifecycle: intake, deployment, monitoring, retirement. You report metrics to the board. This is the stage where an AI security program starts actually working.

Stage 5: Optimized. You have closed the feedback loop. Incident data feeds back into the policy. Deployment patterns feed back into the controls. You’re shipping controls faster than teams find workarounds. Your program has reached the point where circumventing it is more expensive than complying with it. This stage is rare, and it is the goal.

Most mature programs in 2026 are between Stage 3 and Stage 4. A few Fortune 500s have reached Stage 4. Nobody’s at 5 yet.

Who owns AI security: CISO, CIO, or Chief AI Officer?

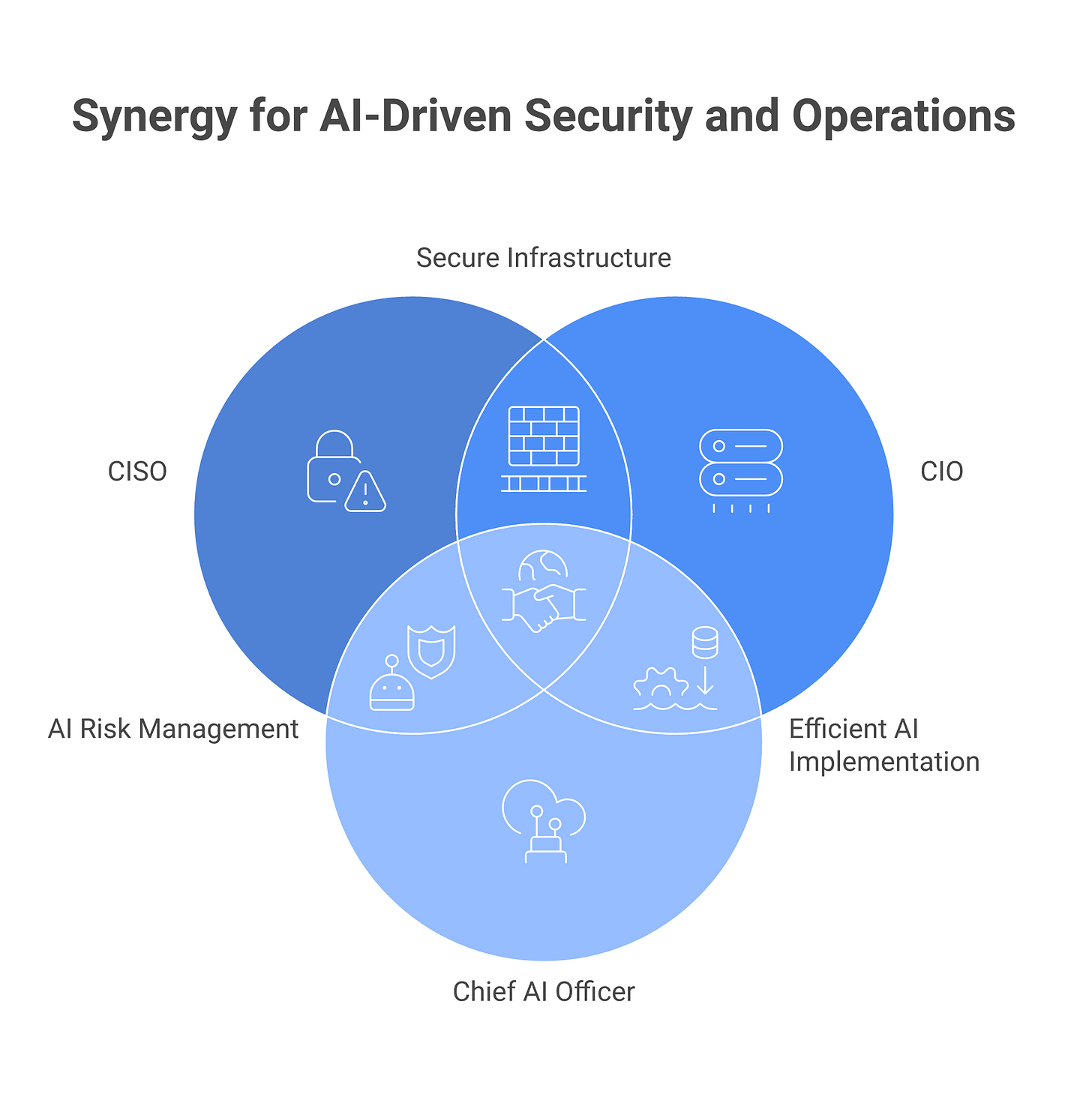

This question comes up in every org-chart redesign. My answer has cost me client relationships, because it’s the thing nobody wants to hear: you need all three, they need to coordinate, and most orgs make this harder than it has to be.

Here’s the responsibility map:

The CISO owns the risk. If an AI system is breached, leaks data, or gets compromised, the CISO is accountable. The CISO needs final sign-off on high-risk AI deployments. The CISO owns the threat model, the control framework, and the incident response plan. This can’t be delegated.

The CIO or Chief Technology Officer owns the operational surface. The CIO knows the tools, the infrastructure, the data flows. The CIO knows which systems can connect to which data sources, what network access looks like, what the backup and disaster-recovery story is. The CISO’s controls don’t work if the CIO isn’t involved in translating them to technical reality. For agentic systems, this is especially true; see our agentic AI security guide for how the CIO role expands.

The Chief AI Officer (CAO), if you have one, owns the velocity. The CAO’s job is to unblock teams that want to deploy AI, to maintain standards, and to drive adoption. If the CISO and CAO aren’t aligned, one of two things happens: either AI deployments slow to a crawl (CISO wins, company loses), or risk gets dismissed (CAO wins, company loses later). The CAO needs to be in the room, negotiating tradeoffs, not circling back after decisions are made.

The common mistake is to give ownership to one person and call it solved. That never works. What works is explicit coordination, clear ownership boundaries, and a decision-making framework that includes all three perspectives.

If you don’t have a Chief AI Officer yet, the CISO and CIO need to own this jointly. One of them leads (usually the CISO for risk-critical decisions, the CIO for operational ones), but decisions get made together.

Building the AI inventory: the step everyone skips

You cannot secure what you have not enumerated. Yet most organizations starting an AI security program skip inventory and jump straight to policy. This is the wrong priority order.

Here’s why inventory comes first: a policy does nothing for AI systems you don’t know exist. An inventory tells you the true scope of the problem, including what’s high-risk, what’s benign, and what you didn’t know about. Inventory feeds everything else: the control baseline, the prioritization, the board narrative.

An AI inventory has three dimensions:

First, the systems dimension. Every AI system the organization uses or builds. This includes GenAI tools your teams use (ChatGPT, Claude, Gemini), custom LLM applications your engineering team built, AI features embedded in purchased software (Salesforce Agentforce, GitHub Copilot, Microsoft Copilot), and agentic systems and automation.

Second, the data dimension. For each system, what data can flow in and out? Is it production customer data, anonymized data, training data, logs? Is it flowing to third parties or staying internal? What’s the classification? Most inventory-taking misses this because it requires cross-team coordination with data governance, and that’s annoying.

Third, the ownership dimension. Who owns the system? Who makes deployment decisions? Who’s responsible if something goes wrong? Without this, you can’t make prioritization decisions later.
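To make the three dimensions concrete, here is a minimal sketch of an inventory record in Python. The field names, the `DataClass` levels, and the `gaps()` helper are hypothetical, not a standard schema; the point is that a record missing its data or ownership dimension should be visibly incomplete rather than silently accepted.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Set


class DataClass(Enum):
    """Hypothetical classification levels; substitute your own scheme."""
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


@dataclass
class AISystem:
    # Systems dimension: what it is and who supplies it.
    name: str
    kind: str    # e.g. "genai_tool", "custom_llm_app", "embedded_feature", "agent"
    vendor: str
    # Data dimension: what flows in and out, and whether it leaves the org.
    data_classes: Set[DataClass] = field(default_factory=set)
    sent_to_third_party: bool = False
    # Ownership dimension: who decides, and who answers when it breaks.
    business_owner: Optional[str] = None
    technical_owner: Optional[str] = None

    def gaps(self) -> List[str]:
        """List the dimensions that are still incomplete for this record."""
        missing = []
        if not self.data_classes:
            missing.append("data")
        if self.business_owner is None or self.technical_owner is None:
            missing.append("ownership")
        return missing
```

A record whose `gaps()` comes back empty is complete enough to prioritize; anything else goes back to the team for follow-up.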

Most teams approach inventory reactively: they ask teams to report their AI use. Compliance sends an email. Teams fill out a form. You get a 30% response rate and twice as many false positives as real systems. This is the approach that fails.

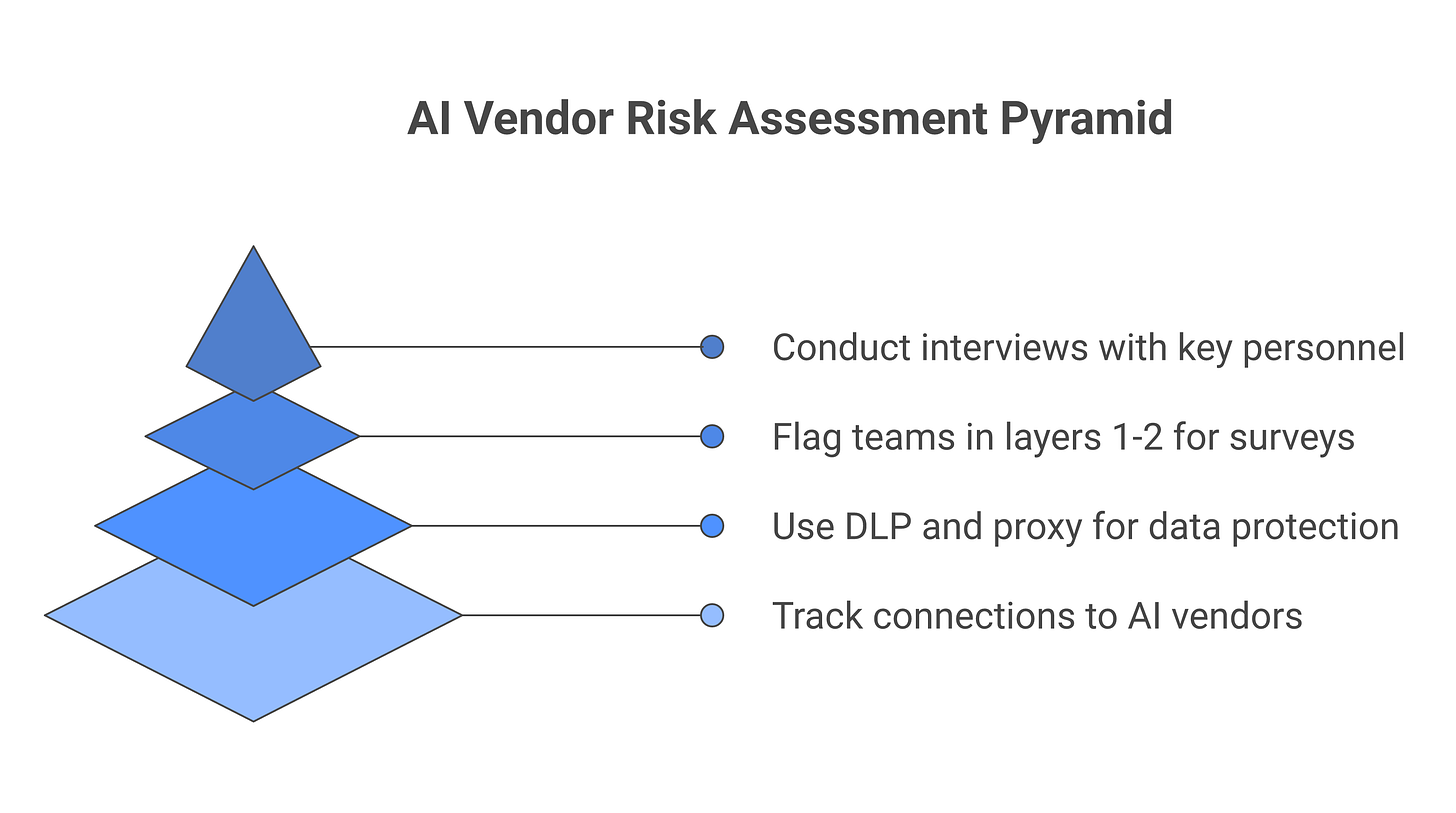

A better approach is active discovery, layered:

Start with network discovery. Monitor your network for outbound connections to known AI vendors (OpenAI, Anthropic, Google, Mistral, etc.). Log them for 30 days and correlate by source. This is cheap and gives you a baseline of shadow AI that your teams are using.
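A minimal sketch of the network step, assuming you can export proxy or DNS logs as whitespace-separated `timestamp source destination` lines (a hypothetical format; adapt the parser to whatever your proxy emits). The domain watchlist is illustrative and far from exhaustive.

```python
from collections import Counter
from typing import Optional

# Illustrative, non-exhaustive watchlist of AI vendor endpoints.
AI_DOMAINS = {
    "api.openai.com": "OpenAI",
    "chatgpt.com": "OpenAI",
    "api.anthropic.com": "Anthropic",
    "claude.ai": "Anthropic",
    "generativelanguage.googleapis.com": "Google",
    "gemini.google.com": "Google",
    "api.mistral.ai": "Mistral",
}


def vendor_for(domain: str) -> Optional[str]:
    """Match a domain or any of its subdomains against the watchlist."""
    domain = domain.lower().rstrip(".")
    for known, vendor in AI_DOMAINS.items():
        if domain == known or domain.endswith("." + known):
            return vendor
    return None


def shadow_ai_baseline(log_lines) -> Counter:
    """Count AI-vendor connections per (source, vendor) pair.

    Assumes the hypothetical format '<timestamp> <source_host> <destination>'.
    """
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines instead of failing the whole run
        _, source, dest = parts[:3]
        vendor = vendor_for(dest)
        if vendor:
            counts[(source, vendor)] += 1
    return counts
```

Run it over the 30 days of logs and sort by count: the top (source, vendor) pairs are where your shadow AI baseline starts.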

Add security-tool signals. Your DLP, SaaS management tool, proxy, and EDR all carry signals about AI use. Collect these into a single inventory and deduplicate.

Then send a survey, but target it. Ask only the teams that showed up in network or security signals whether they’re using AI, what for, and what data touches it. Response rate will be 80%+.
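The consolidate-then-target flow can be sketched like this. `Signal`, `normalize()`, and the tool labels are hypothetical; real tools disagree on app naming far more than this simple normalizer handles, so expect to maintain an alias table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    source_tool: str   # e.g. "dlp", "proxy", "edr", "saas_mgmt"
    team: str
    app: str           # AI app or vendor name as the tool reports it


def normalize(app: str) -> str:
    """Collapse trivial naming differences between tools (hypothetical rule)."""
    return app.strip().lower().replace(" ", "").replace("-", "")


def consolidate(signals):
    """Deduplicate signals into one inventory keyed by (team, app).

    Each entry records which tools saw it, which doubles as a confidence hint.
    """
    inventory = {}
    for s in signals:
        key = (s.team, normalize(s.app))
        inventory.setdefault(key, set()).add(s.source_tool)
    return inventory


def survey_targets(inventory):
    """Only teams that showed up in at least one signal get the survey."""
    return sorted({team for team, _ in inventory})
```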

Finally, conduct spot interviews with engineering, finance, and legal to catch what network discovery missed. Ask: “What AI systems have you shipped in the last 90 days, whether for internal use or for customers?” Expect roughly 20% of your systems to surface only this way.

The result of 90 days of this work is a credible inventory. Not perfect, but credible. And credible is enough to start.

Policy to standards to controls: the translation problem

Many organizations write a policy and then immediately jump to tooling. They buy an AI red-teaming tool, or an AI DLP, or a discovery platform, and expect it to solve the problem. The policy doesn’t connect to the tool. Teams don’t know what to do with the tool. Risk doesn’t go down.

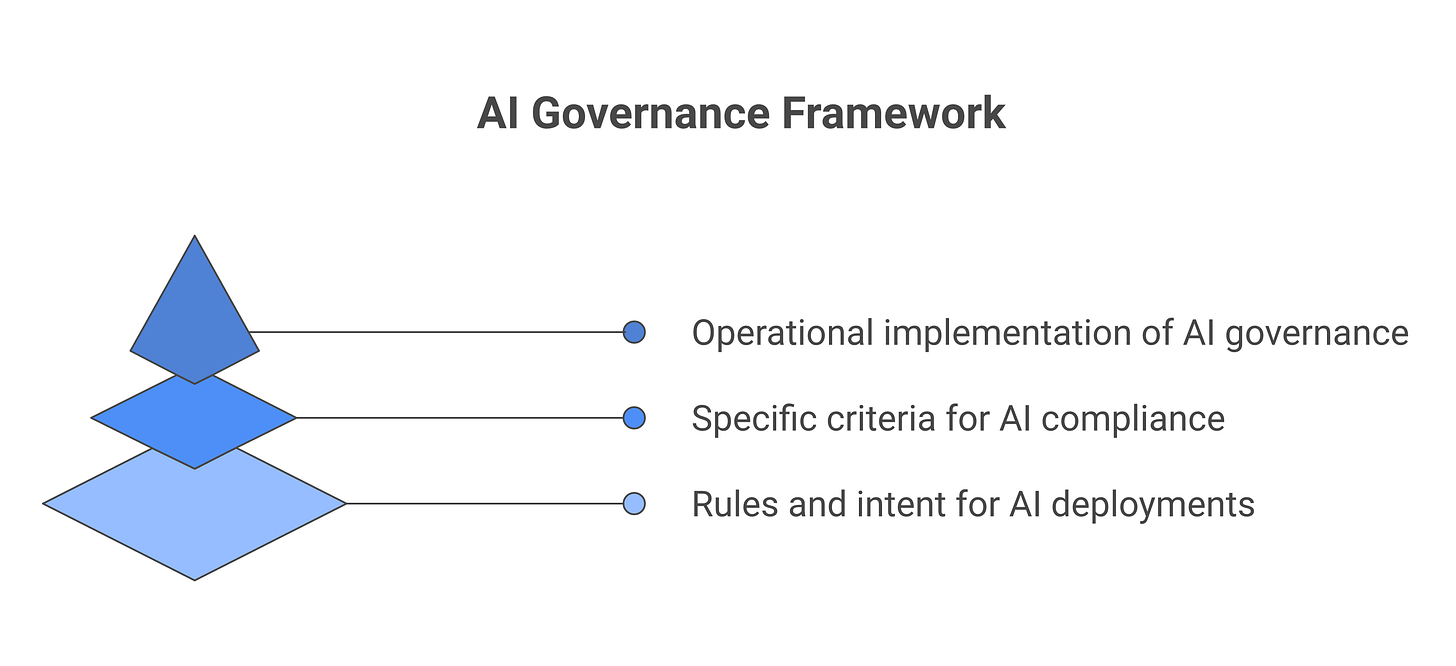

The missing layer is standards. Standards translate policy into specific, testable criteria. Controls are then the implementation of those standards.

Here’s what this looks like in practice:

Policy says: “AI deployments involving sensitive customer data require risk assessment before deployment.”

This is too vague to act on. Who does the assessment? What does “sensitive” mean? How long does the assessment take? Can teams ship while it’s in progress? What makes an assessment “adequate”?

Standards say: “Any AI system that processes, trains on, or outputs financial data, health data, or personally identifiable information (PII) must undergo a data classification review. The review must confirm that (1) the data classification is accurate, (2) the AI provider’s data handling terms are compatible with that classification, and (3) a data processing addendum is in place if required by law. The review must be completed before the system goes to production. Approval requires CISO sign-off for financial and health data and CIO sign-off for PII.”
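Written this way, the standard is testable. Here is a sketch that encodes the example standard as a production gate. The data-type labels and check names are hypothetical, and requiring both approvers when a system touches PII as well as financial or health data is an assumption, since the standard as written doesn’t address that case.

```python
from enum import Enum


class Sensitive(Enum):
    FINANCIAL = "financial"
    HEALTH = "health"
    PII = "pii"


# The three confirmations the standard requires from the review.
REVIEW_CHECKS = (
    "classification_accurate",
    "provider_terms_compatible",
    "dpa_in_place_if_legally_required",
)


def review_requirement(data_types):
    """Return None if no review is needed, else the set of required approvers."""
    valid = {s.value for s in Sensitive}
    hits = {Sensitive(d) for d in data_types if d in valid}
    if not hits:
        return None
    approvers = set()
    if hits & {Sensitive.FINANCIAL, Sensitive.HEALTH}:
        approvers.add("CISO")
    if Sensitive.PII in hits:
        approvers.add("CIO")  # assumption: mixed systems need both sign-offs
    return approvers


def may_go_to_production(data_types, checks_passed, signoffs):
    """Gate: every required check confirmed and every required approver signed."""
    required = review_requirement(data_types)
    if required is None:
        return True
    return set(REVIEW_CHECKS) <= set(checks_passed) and required <= set(signoffs)
```

Each question from the vague policy now has a concrete answer in code: what counts as sensitive, what the review must confirm, and who signs.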

This is specific enough to act on. Now the control question becomes operational.

Controls might include: An intake form that auto-flags systems handling sensitive data. A template data processing addendum. A spreadsheet that tracks DPAs by vendor. Quarterly audits that verify all high-risk systems have completed reviews.
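The quarterly audit control might look like the sketch below. The record keys (`high_risk`, `review_completed`, `dpa_required`) and the vendor-to-expiry mapping standing in for the DPA spreadsheet are hypothetical.

```python
from datetime import date


def audit_high_risk(systems, dpa_expiry_by_vendor, today: date):
    """Flag high-risk systems lacking a completed review or a valid DPA.

    systems: dicts with hypothetical keys name, vendor, high_risk,
        review_completed (a date, or None), dpa_required (bool).
    dpa_expiry_by_vendor: vendor -> DPA expiry date (the tracking spreadsheet).
    """
    findings = []
    for s in systems:
        if not s["high_risk"]:
            continue
        if s["review_completed"] is None:
            findings.append((s["name"], "no completed review"))
        if s["dpa_required"]:
            expiry = dpa_expiry_by_vendor.get(s["vendor"])
            if expiry is None:
                findings.append((s["name"], "no DPA on file"))
            elif expiry < today:
                findings.append((s["name"], "DPA expired"))
    return findings
```

An empty findings list is the evidence that the control is working; a non-empty one is the quarter’s remediation queue.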

The problem is that almost nobody has this three-layer structure. They have policy. They have tools. They don’t have standards. The result is kabuki: tools run, reports get generated, and nobody knows whether risk is actually lower.

To build this out, start with your top three policies (the ones that apply to highest-risk deployments). For each one, write standards that ask: what specific decisions need to be made? Who makes them? What information do they need? How do they record the decision? Then and only then design the control.

The AI security stack in 2026: tools and categories

The AI security vendor landscape is young and chaotic. Some categories are essential. Some are marketing.

The essential categories:

AI discovery and inventory. You need visibility into shadow AI. Tools like Harmonic, Lasso, and Netskope have built AI-specific discovery into their SaaS management or DLP platforms. These matter most when you’re starting inventory. The honest assessment: commodity SaaS management (Zylo, Flexera) plus a network proxy (Netskope) gets you 70% of the way there. AI-specific tools add the last 30%. Start with commodity tools, and add specialty tools only if that 30% gap is mission-critical.

AI red teaming and evaluation. If you’re running custom LLM applications or agentic systems, quarterly red teaming is essential. Tools matter here, and the market is nascent. Options include: building it in-house with open-source frameworks (Garak, PyRIT), hiring consultants (Anthropic, OpenAI, and dozens of smaller firms), or using platform features (OpenAI’s early red-teaming preview, Anthropic’s classifier). No vendor has cracked this yet. Expect to combine approaches.

LLM-specific DLP. Traditional DLP was built for email. It’s not built for LLM prompts, LLM outputs, or agent-orchestrated data movement. LLM DLP is a new category. Vendors include Lasso, Harmonic, Netskope, and DoControl. The honest take: these tools reduce shadow AI and obvious mistakes. They don’t solve intentional data exfiltration, because a motivated team can move data outside the tool’s view. Think of them as raising the cost of misuse, not preventing determined exfiltration.
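To show what “raising the cost of misuse” means in practice, here is a deliberately simple prompt scanner. The regex detectors are illustrative assumptions, not how any of the listed vendors work. Note how easily it is bypassed (spell the digits out, base64-encode the text), which is exactly the limitation described above.

```python
import re

# Hypothetical detectors; commercial tools use far richer classifiers.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits
}


def luhn_ok(number: str) -> bool:
    """Luhn checksum, used to cut false positives on card-like digit runs."""
    digits = [int(c) for c in number if c.isdigit()]
    total = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_prompt(prompt: str):
    """Redact likely sensitive values before a prompt leaves the network.

    Returns (redacted_prompt, list_of_finding_types).
    """
    findings = []

    def redactor(kind):
        def _sub(match):
            if kind == "card" and not luhn_ok(match.group()):
                return match.group()  # failed Luhn: probably not a card number
            findings.append(kind)
            return f"[{kind.upper()} REDACTED]"
        return _sub

    for kind, pattern in PATTERNS.items():
        prompt = pattern.sub(redactor(kind), prompt)
    return prompt, findings
```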

AI agent identity and governance. If you’re deploying agents, you need identity. Options range from extending service-account IAM (cheap, limited) to building specialized non-human identity (NHI) infrastructure (expensive, future-proof). Vendors include Aembit, Clutch, Orchid, and Astrix. This is premature for many organizations in early 2026, but worth tracking.
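At the cheap end of that range, agent identity can be as simple as short-lived, scoped credentials bound to one agent and one human owner. The sketch below hand-rolls an HMAC-signed token with the standard library purely to show the shape; the claim names, scopes, and 15-minute default lifetime are hypothetical, and in production you would use your identity provider’s token issuance rather than custom crypto.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"replace-with-a-managed-secret"  # hypothetical; keep in a vault/KMS


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode().rstrip("=")


def _sign(body: str) -> str:
    return _b64(hmac.new(SIGNING_KEY, body.encode(), hashlib.sha256).digest())


def issue_agent_token(agent_id, owner, scopes, ttl_seconds=900, now=None):
    """Mint a short-lived, scoped credential bound to an agent and its owner."""
    now = int(time.time() if now is None else now)
    claims = {"sub": agent_id, "owner": owner, "scopes": sorted(scopes),
              "iat": now, "exp": now + ttl_seconds}
    body = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{body}.{_sign(body)}"


def authorize(token, required_scope, now=None):
    """Return the claims if the token is authentic, unexpired, and in scope."""
    body, _, sig = token.partition(".")
    if not hmac.compare_digest(sig, _sign(body)):
        raise PermissionError("bad signature")
    padded = body + "=" * (-len(body) % 4)  # restore stripped base64 padding
    claims = json.loads(base64.urlsafe_b64decode(padded))
    now = int(time.time() if now is None else now)
    if now >= claims["exp"]:
        raise PermissionError("token expired")
    if required_scope not in claims["scopes"]:
        raise PermissionError(f"scope {required_scope!r} not granted")
    return claims
```

The design point is the `owner` claim: every agent action traces back to a named human, which is what makes the ownership dimension of your inventory enforceable.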

AI security posture management. Vendors like Wiz and Snyk, along with tools like GitHub’s Dependabot, have started covering “AI security,” but mostly they’re selling existing tools that incidentally touch LLMs. True security posture management for AI systems doesn’t exist yet. When it does, it’ll be a CISO-friendly dashboard showing what AI systems you have, what risk profile each one has, what controls are in place, which ones are drifting out of policy, and which ones need attention this week. This is probably 12 months away for any vendor.

The low-priority category:

“AI-powered” threat detection on AI systems. Using machine learning to detect anomalous agent behavior sounds good in a pitch. In practice, the false-positive rate is too high and the ROI doesn’t justify it. Rules-based detection on well-instrumented logging (timestamp, user, action, result, latency) is more reliable and cheaper. Don’t fall for this category yet.
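Here is what rules-based detection over those five fields can look like. The thresholds, action names, and business-hours window are hypothetical placeholders to tune against your own baseline; the function assumes it is handed one time window of events (say, the last hour).

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass
class AIEvent:
    # The five fields named above.
    timestamp: datetime
    user: str        # human user or agent identity
    action: str      # e.g. "prompt", "tool:send_email", "tool:export_table"
    result: str      # e.g. "ok", "denied", "error"
    latency_ms: int


# Hypothetical thresholds and watchlists.
MAX_DENIALS = 5
SLOW_MS = 30_000
HIGH_RISK_ACTIONS = {"tool:export_table", "tool:send_email"}
BUSINESS_HOURS = range(7, 20)


def detect(events):
    """Apply simple, explainable rules to one window; return (rule, user) alerts."""
    alerts = []
    denials = Counter(e.user for e in events if e.result == "denied")
    for user, n in denials.items():
        if n >= MAX_DENIALS:
            alerts.append(("repeated_denials", user))
    for e in events:
        if e.latency_ms > SLOW_MS:
            alerts.append(("slow_call", e.user))
        if e.action in HIGH_RISK_ACTIONS and e.timestamp.hour not in BUSINESS_HOURS:
            alerts.append(("off_hours_high_risk_action", e.user))
    return alerts
```

Every alert names the rule that fired, so triage never starts with “why did the model flag this?”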

Most organizations should start with: discovery tool (could be commodity), logging infrastructure (Splunk, Datadog, or similar), and a red-teaming plan (internal or consultant-led). Add specialized tools as specific problems emerge.

How to report AI risk to the board

The board doesn’t want to know about your AI policy. They don’t care about your maturity level. They care about three things: probability of incident, business impact if it happens, and whether you’re on top of it.

AI risk reporting should follow this structure:

Headline (one slide). “We have X AI systems in production. Y of them are high-risk due to data access or autonomous action. We have controls in place for all Y. No breaches or incidents in the past 90 days. We’re monitoring for Z.” Make this one visual, heavily data-driven, honest about what you don’t yet know.
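Generating the headline from the inventory, rather than writing it by hand, keeps the numbers honest. A minimal sketch, assuming hypothetical inventory fields for data access, autonomy, control coverage, and 90-day incident counts:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ProdSystem:
    name: str
    sensitive_data: bool      # access to regulated or customer data
    autonomous: bool          # acts without a human in the loop
    controls_in_place: bool
    incidents_90d: int = 0


def headline(systems: List[ProdSystem]) -> str:
    """Build the one-slide headline sentence from inventory data."""
    high = [s for s in systems if s.sensitive_data or s.autonomous]
    covered = sum(s.controls_in_place for s in high)
    incidents = sum(s.incidents_90d for s in systems)
    return (f"We have {len(systems)} AI systems in production. "
            f"{len(high)} are high-risk due to data access or autonomous action. "
            f"Controls are in place for {covered} of {len(high)}. "
            f"Incidents in the past 90 days: {incidents}.")
```

If controls cover fewer than all high-risk systems, the sentence says so, which is the honesty the board slide needs.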

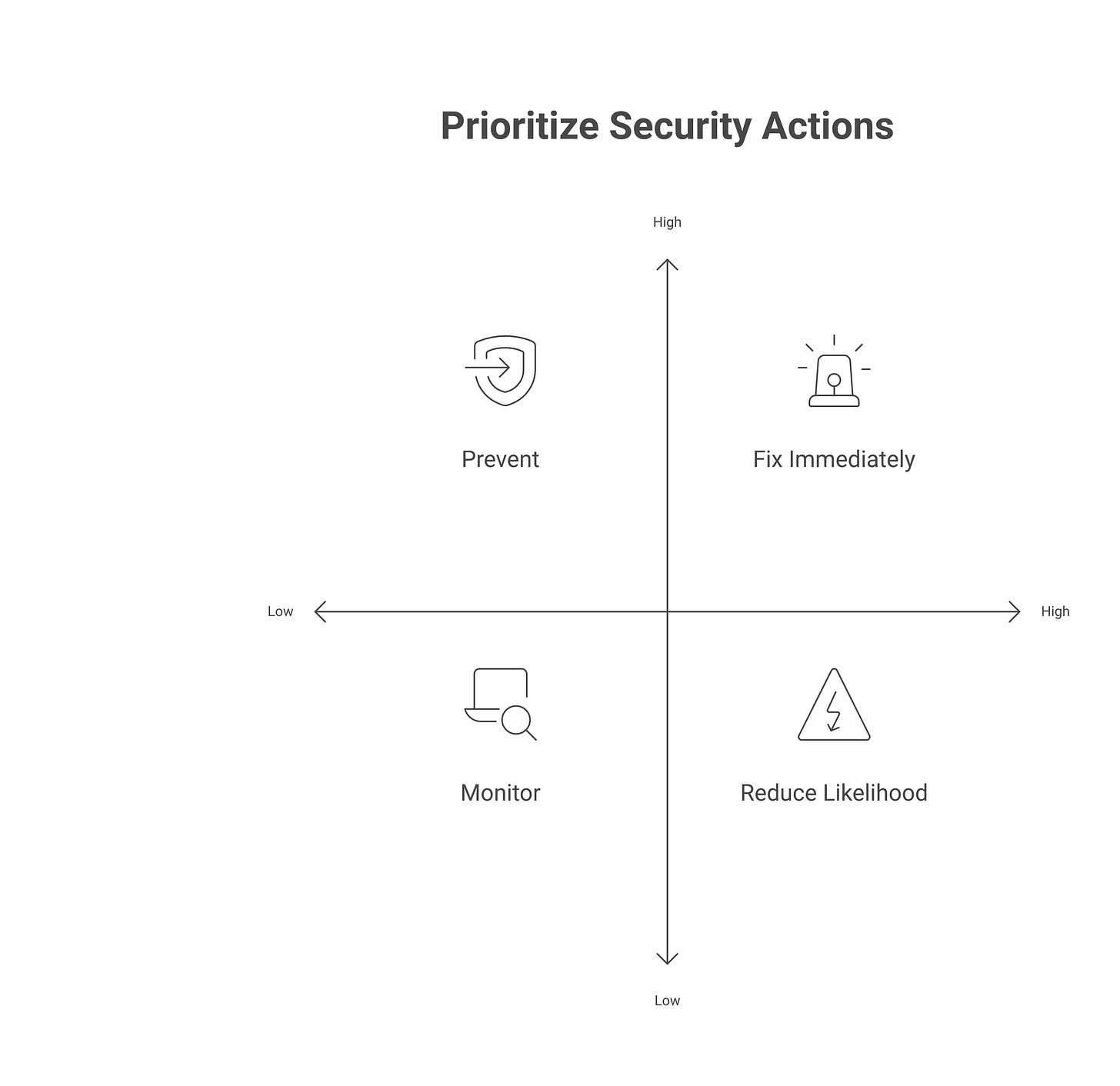

Risk breakdown (one slide). A 2x2 matrix. Axis 1: likelihood of breach (low to high, based on threat surface + controls). Axis 2: business impact if breached (cost, customer impact, regulatory). Plot each high-risk system as a point. This is the frame the board already thinks in.
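Placing systems on the matrix can be as simple as the sketch below. The 1-5 scoring scale, the threshold of 3, and the quadrant labels are hypothetical; what matters is that the scores come from threat-surface and control data rather than gut feel.

```python
def quadrant(likelihood: int, impact: int, threshold: int = 3) -> str:
    """Place a system on the board's 2x2 using 1-5 scores (hypothetical scale)."""
    if not (1 <= likelihood <= 5 and 1 <= impact <= 5):
        raise ValueError("scores must be between 1 and 5")
    high_likelihood = likelihood >= threshold
    high_impact = impact >= threshold
    if high_likelihood and high_impact:
        return "act now"
    if high_impact:
        return "protect"          # unlikely, but costly if it happens
    if high_likelihood:
        return "reduce exposure"  # likely, but contained impact
    return "monitor"


def board_matrix(systems):
    """Group (name, likelihood, impact) tuples by quadrant for one slide."""
    grid = {}
    for name, likelihood, impact in systems:
        grid.setdefault(quadrant(likelihood, impact), []).append(name)
    return grid
```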

Control validation (one slide). What evidence do you have that your controls are working? If you run red teaming quarterly, show: “We ran red teams on our three highest-risk systems. We found N issues. We fixed M of them before they became real incidents.” Real data, not claims.

Roadmap (one slide). What’s your program doing this quarter? Focus on the things that reduce the risk in the headline visual. “We’re extending our inventory to include embedded AI in purchased tools” or “We’re deploying AI agent identity controls for our autonomous workflow systems.” Nothing aspirational. Nothing that’s been on the roadmap for six months.

The mistake most organizations make is sending the board a narrative document. CISOs love detailed explanations. Boards skip to the bottom to find the one sentence that tells them whether to worry. Give the board data. Give them the two-sentence version first. Put detail in backup slides for the three board members who care.

The AI security stack in 2026: specific vendors

The vendor landscape in 2026 includes:

AI discovery: Harmonic, Lasso Security (Slack + code), Netskope (web + SaaS), Airtight.

AI governance frameworks: Orchid, Prophet Security (for compliance workflows).

Agentic identity: Aembit, Astrix, Orchid.

Red teaming: Anthropic, OpenAI (limited), DIY with Garak/PyRIT, consultants.

LLM DLP: Lasso, Harmonic, Netskope, DoControl.

The list will shift. New vendors are shipping weekly. The framework matters more than the vendor: focus on the category, evaluate tools against your specific requirement, and avoid buying multiple tools that do the same thing.

Frequently asked questions

Is AI security a CISO or Chief AI Officer responsibility?

Both. The CISO owns risk. The Chief AI Officer owns velocity. You need both perspectives at the table, and you need a decision framework that honors both. If you only have one, you’ll optimize in a way that breaks the other one. For EU-regulated organizations, this coordination becomes even more critical; see EU AI Act compliance for the specific obligations that shape this relationship.

What does a mature AI security program look like in practice?

Stage 4 maturity: you have inventory (automated, updated quarterly). You have clear policies with operational standards. You have controls that prevent most mistakes and detect violations. You have incident response playbooks. You run red teams quarterly on high-risk systems. You report to the board quarterly with data on risk and control effectiveness. You have a person or small team that owns the program full-time. Incident response for AI events is fast and informed.

What’s the first thing to build when starting an AI security program?

Inventory. You cannot prioritize, write policy for, or control what you haven’t enumerated. Spend 30 days on discovery, build a credible list of AI systems, and then layer standards and controls on top. Policy is almost always premature until you understand what you’re protecting.

How do I report AI risk to the board without getting into the weeds?

Use a single visual: a 2x2 matrix with threat likelihood (x-axis) and business impact (y-axis). Plot each high-risk AI system as a point. One sentence per quadrant explaining what you’re doing about it. Backup slides with detail for the board members who ask.

What’s a realistic 12-month roadmap for a new AI security program?

Months 1-2: Inventory and discovery (active + reactive). Months 3-4: Write standards and map controls. Months 5-6: Deploy automated discovery tool, logging infrastructure. Months 7-8: Run first red teams, establish incident response. Months 9-10: Board reporting, policy socialization, gap-closure planning. Months 11-12: Iterate on controls, train teams, measure effectiveness. By month 12 you should be at Stage 3 maturity: managed, with strong visibility and clear governance. Stage 4 (measured, with automation and data-driven improvement) is a 12-month push from there.

If this was useful, subscribe to Cyberwow for the CISO-only filter on AI security: no vendor pitches, no news cycle, just decision-oriented analysis.